Why you shouldn't be surprised by Prime Video's move away from serverless

Man, you all go crazy really easily

Since last week, LinkedIn and some other channels have been on fire over a post by the Prime Video team in which they say they moved from serverless to a monolith running on ECS.

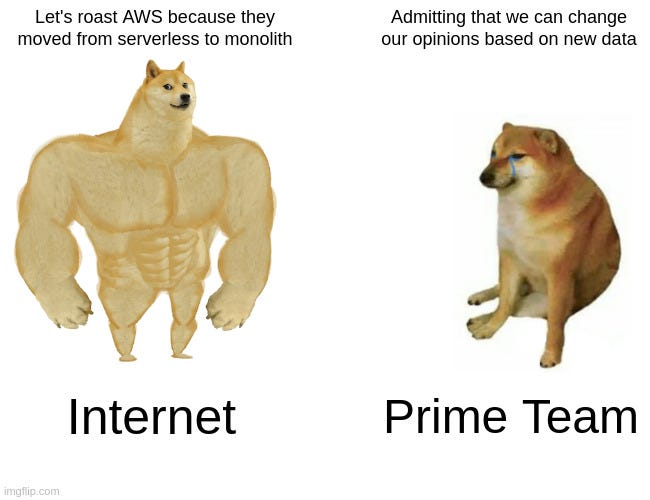

Some people are saying that “Even Amazon can’t make sense of microservices”, showing a bias against microservices; YouTubers are making clickbait videos (I still hope clickbait videos will die in the void); and people who use serverless feel attacked by Prime Video’s choice.

The feeling I get after reading some of these posts is that people think AWS engineers can’t make wrong decisions. Oh, come on. We are grown-ups, and occasionally we need to admit that we made the wrong choice, evaluate the current state of our workload, and use what we learned to make better decisions moving forward.

Let’s walk through some parts of the post:

> The two most expensive operations in terms of cost were the orchestration workflow and when data passed between distributed components.

That is the part where they acknowledge the problem: moving data between the distributed components cost more than actually processing it. It is something we may not notice in our daily work on AWS, but data transfer between applications is often where the bill really starts to grow.
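To make that reasoning concrete, here is a minimal back-of-the-envelope sketch. Everything in it is an assumption for illustration: the `Pricing` fields, the function names, and above all the numbers, which are placeholders and not real AWS prices or figures from the Prime Video post. It only shows how per-item orchestration and transfer fees can outweigh compute once every item crosses a component boundary.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    """Placeholder unit prices in USD. These are NOT real AWS prices."""
    per_state_transition: float  # orchestration fee per workflow step
    per_storage_request: float   # fee per read/write to intermediate storage
    per_compute_second: float    # fee per second of actual processing


def distributed_cost(frames, transitions_per_frame, requests_per_frame,
                     compute_seconds_per_frame, pricing):
    """Cost breakdown when every frame is passed between separate components."""
    return {
        "orchestration": frames * transitions_per_frame * pricing.per_state_transition,
        "transfer": frames * requests_per_frame * pricing.per_storage_request,
        "compute": frames * compute_seconds_per_frame * pricing.per_compute_second,
    }


def monolith_cost(frames, compute_seconds_per_frame, pricing):
    """Cost when frames stay in process memory: only compute is paid for."""
    return frames * compute_seconds_per_frame * pricing.per_compute_second


if __name__ == "__main__":
    # Hypothetical workload and prices, chosen only to illustrate the shape
    # of the problem.
    pricing = Pricing(per_state_transition=0.00003,
                      per_storage_request=0.000005,
                      per_compute_second=0.0001)
    breakdown = distributed_cost(1_000_000, 3, 2, 0.05, pricing)
    print("distributed:", breakdown)
    print("monolith:", monolith_cost(1_000_000, 0.05, pricing))
```

With different placeholder values the balance can easily tip the other way, which is the point: you have to plug in your own workload before deciding.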

> We designed our initial solution as a distributed system using serverless components (for example, AWS Step Functions or AWS Lambda), which was a good choice for building the service quickly. In theory, this would allow us to scale each service component independently

Let’s think for a minute here: they had a plan, some business domains, and thought that going with serverless would allow them to build the service quickly. What they may have done wrong was to skip the proofs of concept that would have validated whether serverless could deliver everything they expected. So they built it with decoupling in mind, orchestrating things with Lambda and Step Functions.

Moving on.

Now, after a couple of months (or years) with the product running, they have a clear picture of their domains, as stated here:

> Our service consists of three major components. The media converter converts input audio/video streams to frames or decrypted audio buffers that are sent to detectors. Defect detectors execute algorithms that analyze frames and audio buffers in real-time looking for defects (such as video freeze, block corruption, or audio/video synchronization problems) and send real-time notifications whenever a defect is found. The third component provides orchestration that controls the flow in the service.

I can already see that the operational overhead of doing this with AWS Step Functions would grow steadily, as they state later when they talk about the costs that Step Functions racked up.

> To address the bottlenecks, we initially considered fixing problems separately to reduce cost and increase scaling capabilities. We experimented and took a bold decision: we decided to rearchitect our infrastructure.

Well, here I don’t agree that rearchitecting was a bold decision. It came from lessons learned in the past and was the right call for the situation they were in.

The conclusion is that, when all was said and done, they cut their infrastructure costs by 90% by moving from a serverless architecture to a monolith.

And that’s all right. Today they decided to move to a monolith. Tomorrow they may move to another set of services (a mix of compute and serverless), and that’s OK. These are just engineering decisions made with the data and knowledge available today.

Their post is not a reason to attack microservices in favor of monoliths, or to attack serverless in favor of containers. Every architectural solution has its place in the right context. You just need to be a grown-up to admit that and realize that, as with everything in tech, it depends.