RANT: GitHub Actions is not Good, it's far from it

At my first job, I used GitLab both to host our projects' repositories and for its CI/CD. At my second job, I was lucky enough to keep working with GitLab, and I practically mastered its CI/CD.

After that, I worked at a company that used GitHub and Travis CI, and the couple of projects I had to set up with it almost made me sick of how bad Travis CI is: I needed a ton of boilerplate to do simple stuff. Luckily, I moved on to a new job where we still use GitHub, but we are now migrating from CircleCI to GitHub Actions.

GitHub Actions is a bit better than Travis CI, but both are light-years behind GitLab CI/CD. Way behind.

GitLab CI/CD gives you a single view of your whole pipeline, just one click away. You can easily reuse jobs with YAML anchors or GitLab CI templates. You can keep separate CI files in one repository and include them in the main CI file. You can trigger jobs automatically or manually. You can skip jobs based on changes in specific folders. Cache and artifacts take a single line to configure.
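To make that list concrete, here is a minimal sketch of a `.gitlab-ci.yml` that uses most of those features at once. The included file `ci/deploy.yml`, the `frontend/` folder, the job names, and the `node:18` image are all hypothetical, picked only for illustration.

```yaml
# Hypothetical .gitlab-ci.yml; file, folder and job names are made up.
include:
  - local: ci/deploy.yml          # a separate CI file from the same repository

stages:
  - build
  - deploy

.node_defaults: &node_defaults    # YAML anchor with settings shared by jobs
  image: node:18
  cache:
    paths: [node_modules/]        # the cache is one line

build_frontend:
  <<: *node_defaults              # merge the anchor into this job
  stage: build
  script:
    - npm ci
    - npm run build
  artifacts:
    paths: [dist/]                # so are artifacts
  rules:
    - changes:
        - frontend/**/*           # run only when something under frontend/ changed

deploy_frontend:
  stage: deploy
  script:
    - echo "deploying dist/"
  when: manual                    # shown as a play button in the pipeline view
```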

Now let's look at GitHub Actions. So far, I haven't found a way to make a step trigger manually. I can configure a workflow to be triggered with `workflow_dispatch`, but it takes 4 clicks to run it. On GitLab, I add `when: manual` to a job and that's it: I go to my pipeline view and click the job's trigger. If I want to use cache or artifacts, I need to use official GitHub actions, like:

```yaml
- name: Cache pip
  uses: actions/cache@v4
  with:
    path: .venv
    key: ${{ runner.os }}-pip

- name: Upload zip
  uses: actions/upload-artifact@v4
  with:
    name: layer
    path: layer.zip
    retention-days: 1
```

While on GitLab:

```yaml
build_package:
  stage: build
  script:
    - echo Hello World
  artifacts:
    paths:
      - lambda.zip
    expire_in: 7 days
  cache:
    paths: [node_modules/]
```
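For the manual-trigger comparison made earlier, this is roughly what the GitHub side looks like: `workflow_dispatch` is declared on the whole workflow, and you start it from the Actions tab rather than from a button next to a job in the pipeline. The file name and the `environment` input are hypothetical examples.

```yaml
# Hypothetical .github/workflows/manual-deploy.yml
name: Manual deploy

on:
  workflow_dispatch:              # manual trigger, but for the whole workflow
    inputs:
      environment:
        description: Where to deploy
        type: choice
        options: [staging, production]
        default: staging

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - run: echo "Deploying to ${{ inputs.environment }}"
```

The GitLab equivalent is the single `when: manual` line on the job itself.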

For the sake of transparency, I am biased towards GitLab. But I can't wrap my head around needing code from outside my CI config to set up basic stuff like cache and artifacts. And that's without counting how you need an action just to configure your runner with Python or Node.js:

```yaml
- name: Set up Python
  uses: actions/setup-python@v2
  with:
    python-version: "3.9"
```

While on GitLab, I can go straight to a Docker image if I feel like it:

```yaml
build_package:
  image: node:latest
```

GitHub Actions also supports running jobs inside containers, but the container applies to the whole job. So if you need different environments, you have to write separate jobs, each with the subset of steps it needs, while on GitLab each job (the closest thing it has to a step) can run in whatever environment you feel like.
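Here is a sketch of what "different environments means different jobs" looks like on GitHub: each job gets its own `container:`, and because jobs don't share a workspace, files have to be handed over explicitly with the artifact actions. The images, the `dist/` folder, and the npm build commands are assumptions for illustration.

```yaml
# Hypothetical workflow: build with Node, then zip the output with Python.
name: Build and package

on: push

jobs:
  build:
    runs-on: ubuntu-latest
    container: node:18              # the whole job runs in this image
    steps:
      - uses: actions/checkout@v4
      - run: npm ci && npm run build
      - uses: actions/upload-artifact@v4
        with:
          name: dist
          path: dist/

  package:
    needs: build
    runs-on: ubuntu-latest
    container: python:3.9           # a second environment needs a second job
    steps:
      - uses: actions/download-artifact@v4   # hand the files over explicitly
        with:
          name: dist
          path: dist/
      - run: python -m zipfile -c bundle.zip dist/
```

On GitLab these would also be two jobs with two `image:` lines, but by default jobs in later stages download the artifacts of earlier stages automatically.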

And I think this is GitHub Actions' huge limitation. I can't see GitHub workflows as DRY. I constantly find myself copying and pasting code, even when I try to simplify things with the reusable workflows feature. For different environments like production and staging, I need separate workflow files with 99% the same setup but different triggers (like a push to main, or a tag). Triggers are defined at the workflow level, not the step level, so if you need to skip a step, for example, you have to rely on environment variables and conditionals.
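As an illustration of that workaround, here is a minimal sketch of a single workflow that listens to both triggers and decides per step with `if:` conditionals. The `v*` tag pattern and the `echo` deploy commands are placeholders.

```yaml
# Hypothetical single workflow covering both staging and production.
name: Deploy

on:
  push:
    branches: [main]
    tags: ["v*"]

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Deploy to staging
        if: github.ref == 'refs/heads/main'          # push to main
        run: echo "deploying to staging"
      - name: Deploy to production
        if: startsWith(github.ref, 'refs/tags/v')    # pushed tag such as v1.2.0
        run: echo "deploying to production"
```

It works, but the trigger logic now lives in string comparisons scattered across steps instead of in the trigger itself.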

For example, here's how I'm currently deploying a Docker image to ECR with GitHub Actions.

The following snippet is a reusable workflow I wrote to build and push a Docker image to AWS ECR:

```yaml
name: Build and push docker image to aws

on:
  workflow_call:
    inputs:
      dockerfile_name:
        description: Name of the dockerfile
        required: false
        type: string
        default: Dockerfile
      static_tag:
        description: Static tag for image like latest, development, etc
        type: string
        required: false
        default: latest
      image_tag:
        description: Custom tag for image
        type: string
        required: true
    secrets:
      AWS_ACCESS_KEY_ID:
        description: AWS access key id
        required: true
      AWS_SECRET_ACCESS_KEY:
        description: AWS secret access key
        required: true
      ECR_REPOSITORY:
        description: Name of the docker repository
        required: true

jobs:
  docker:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v2

      - name: Configure AWS credentials
        uses: aws-actions/configure-aws-credentials@v1
        with:
          aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
          aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          aws-region: us-east-1

      - name: Login to Amazon ECR
        id: login-ecr
        uses: aws-actions/amazon-ecr-login@v1

      - name: Build, tag, and push image to Amazon ECR
        env:
          ECR_REGISTRY: ${{ steps.login-ecr.outputs.registry }}
          ECR_REPOSITORY: ${{ secrets.ECR_REPOSITORY }}
          IMAGE_TAG: ${{ inputs.image_tag }}
          TAG: ${{ inputs.static_tag }}
        run: |
          # Build once with both tags, then push every tag of the repository
          docker build -t "$ECR_REGISTRY/$ECR_REPOSITORY:$IMAGE_TAG" -t "$ECR_REGISTRY/$ECR_REPOSITORY:$TAG" -f "${{ inputs.dockerfile_name }}" .
          docker push --all-tags "$ECR_REGISTRY/$ECR_REPOSITORY"
```

Here's how I use it:

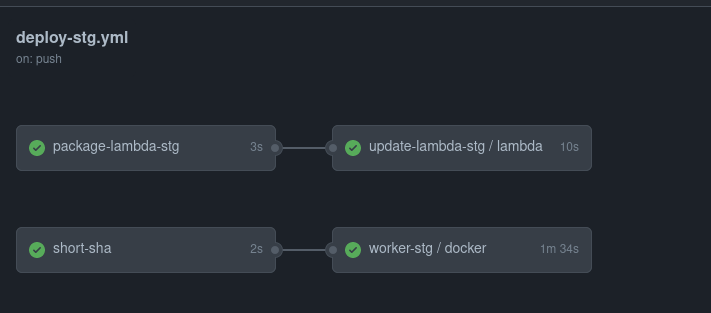

```yaml
name: Deploy Staging

on:
  push:
    branches:
      - "main"

jobs:
  short-sha:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v2
      - name: Short sha
        id: short-sha
        # Write the step output to $GITHUB_OUTPUT (replaces the deprecated ::set-output)
        run: echo "sha_short=$(git rev-parse --short HEAD)" >> "$GITHUB_OUTPUT"
    outputs:
      sha_short: ${{ steps.short-sha.outputs.sha_short }}

  worker-stg:
    needs: short-sha
    uses: docker-build-and-push-with-private-deps.yml@main
    with:
      dockerfile_name: Dockerfile.worker
      image_tag: staging-${{ needs.short-sha.outputs.sha_short }}
    secrets:
      ECR_REPOSITORY: ${{ secrets.ECR_REPOSITORY }}
      AWS_ACCESS_KEY_ID: ${{ secrets.STG_AWS_ACCESS_KEY_ID }}
      AWS_SECRET_ACCESS_KEY: ${{ secrets.STG_AWS_SECRET_ACCESS_KEY }}
      SSH_KEY: ${{ secrets.SSH_KEY }}
```

57 lines of template + 28 lines of setup = 85 lines in total.
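To see the duplication, here is a sketch of what a production counterpart of that staging file might look like, assuming releases are cut by pushing `v*` tags and that production credentials live in hypothetical `PRD_*` secrets. Using `github.ref_name` (the tag name) spares the short-SHA job, but the rest is the same block copied over. The `uses:` reference is copied as-is from the staging file; a real one has to be `owner/repo/.github/workflows/<file>@<ref>` or `./.github/workflows/<file>`.

```yaml
# Hypothetical .github/workflows/deploy-production.yml
# Same call as the staging file; only the trigger, the tag and the secrets change.
name: Deploy Production

on:
  push:
    tags:
      - "v*"

jobs:
  worker-prd:
    uses: docker-build-and-push-with-private-deps.yml@main
    with:
      dockerfile_name: Dockerfile.worker
      image_tag: production-${{ github.ref_name }}   # e.g. production-v1.2.0
    secrets:
      ECR_REPOSITORY: ${{ secrets.ECR_REPOSITORY }}
      AWS_ACCESS_KEY_ID: ${{ secrets.PRD_AWS_ACCESS_KEY_ID }}
      AWS_SECRET_ACCESS_KEY: ${{ secrets.PRD_AWS_SECRET_ACCESS_KEY }}
      SSH_KEY: ${{ secrets.SSH_KEY }}
```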

Now let's see how that works using GitLab CI/CD:

```yaml
# This template builds and publishes a docker image to a given
# repository on AWS
.docker: &docker
  image: docker:stable
  services:
    - docker:stable-dind
  script:
    - echo install aws-cli
    - apk add --no-cache python3 py3-pip
    - pip3 install awscli
    - echo login aws for push
    - aws ecr get-login-password --region us-east-1 | docker login --username AWS --password-stdin $ECR
    - echo build image and push
    - docker build -t $REPO:$IMAGE_TAG .
    - docker push $REPO:$IMAGE_TAG

# Job definition examples
docker_dev:
  extends: .docker
  stage: build
  variables:
    IMAGE_TAG: dev-$CI_COMMIT_SHORT_SHA
    REPO: $DEV_ECR_ACCOUNT/$SERVICE
    AWS_ACCESS_KEY_ID: $DEV_AWS_ACCESS_KEY_ID
    AWS_SECRET_ACCESS_KEY: $DEV_AWS_SECRET_ACCESS_KEY
  when: on_success
  allow_failure: false
```

12 lines of template + 10 lines of setup = 22 lines in total.

To deploy the Docker image to different environments, say development, staging, and production, GitHub Actions needs at least 3 files with the same code but different triggers. With GitLab, I can repeat the `docker_dev` job definition from the previous snippet with different `when` triggers. And that's without even mentioning GitLab's stages feature, which keeps pipeline management clean and easy. Oh gosh, how I miss GitLab.
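A sketch of that GitLab setup, reusing the hidden `.docker` job from the snippet above: staging builds on every push to `main`, production waits for a tag and a click. The `STG_*`/`PRD_*` variable names are hypothetical CI/CD variables, and setting `ECR` per job assumes it holds the registry host that the template logs into.

```yaml
# Hypothetical extra jobs extending the .docker template defined above.
docker_stg:
  extends: .docker
  stage: build
  variables:
    IMAGE_TAG: stg-$CI_COMMIT_SHORT_SHA
    ECR: $STG_ECR_ACCOUNT                 # registry used by the template's docker login
    REPO: $STG_ECR_ACCOUNT/$SERVICE
    AWS_ACCESS_KEY_ID: $STG_AWS_ACCESS_KEY_ID
    AWS_SECRET_ACCESS_KEY: $STG_AWS_SECRET_ACCESS_KEY
  rules:
    - if: $CI_COMMIT_BRANCH == "main"     # every push to main

docker_prd:
  extends: .docker
  stage: build
  variables:
    IMAGE_TAG: $CI_COMMIT_TAG
    ECR: $PRD_ECR_ACCOUNT
    REPO: $PRD_ECR_ACCOUNT/$SERVICE
    AWS_ACCESS_KEY_ID: $PRD_AWS_ACCESS_KEY_ID
    AWS_SECRET_ACCESS_KEY: $PRD_AWS_SECRET_ACCESS_KEY
  rules:
    - if: $CI_COMMIT_TAG                  # only for tags...
      when: manual                        # ...and only when someone clicks play
```

Both jobs live in the same file and show up in the same pipeline view.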

And also, just WHY? Why doesn't GitHub Actions merge workflows with the same name when the workflow file gets renamed? Have they never heard of refactoring? And so far I can't get rid of old workflows I don't use anymore without deleting their runs one by one.

Last but not least

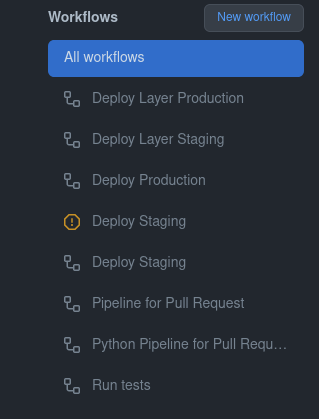

Here are the workflows for my last project. Each one has different triggers, and I often get confused about which one I should look at to check whether things are working properly. Oh, and I can't have dependencies between workflows, which also sucks: I can only set dependencies between jobs in the same workflow. The Layer and Deploy workflows are 99% identical, differing only in triggers and environment setup. This copy-and-paste is awful.
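For what it's worth, GitHub does offer one coarse way to chain workflows: the `workflow_run` trigger, which starts a workflow after another one completes. It's nowhere near GitLab's stages, and it only fires when the workflow file lives on the default branch. Here is a minimal sketch, assuming the upstream workflow is named `Layer` as in the screenshot and that deployment should follow only a successful run on `main`.

```yaml
# Hypothetical .github/workflows/deploy.yml chained after the "Layer" workflow.
name: Deploy

on:
  workflow_run:
    workflows: ["Layer"]          # must match the upstream workflow's `name:`
    types: [completed]
    branches: [main]

jobs:
  deploy:
    # workflow_run also fires when Layer fails, so check its conclusion
    if: github.event.workflow_run.conclusion == 'success'
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.workflow_run.head_sha }}   # the commit Layer ran on
      - run: echo "Layer succeeded, deploying"
```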

The reason for this post is that I just miss this view:

If you have any ideas on how I can improve my GitHub Actions workflows, feel free to reach out to me on Twitter or Telegram at lays147. Otherwise, that's all, folks!